Built-in research bridge

Brain response preview for your pages

AI models that predict human neural responses to what we see and hear are drawing wide attention. Meta’s TRIBE v2 is the headline research direction: a trimodal encoder that predicts brain-like activity from video, audio, and text. Motivd gives founders a practical on-ramp: explore the science, compare captures of Motivd with captures of your own app, and plug in an optional inference worker from Admin → Health.

Why this moment matters

Product teams already A/B test pixels and copy. The next frontier is understanding whether an interface is likely to overload attention or feel coherent before you ship — especially for onboarding, pricing, and dense dashboards.

TRIBE v2 does not replace user research. It is a computational lens: a research model trained on large-scale fMRI data so teams can reason about stimuli in silico. Motivd surfaces that story next to your real builder workflow so the hype stays grounded in what you can run today.

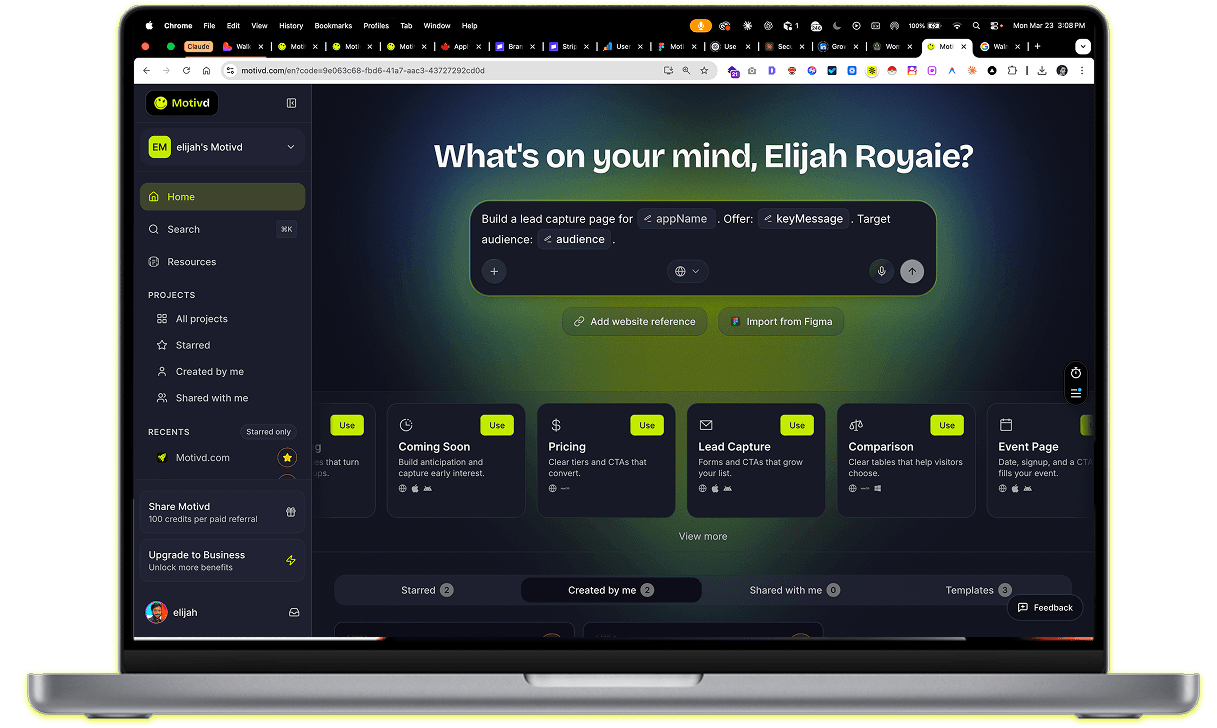

What you get with Motivd

- A public page (this one) that explains the bridge in plain language, with Motivd-made illustrations plus links to Meta’s blog and demo for official TRIBE v2 media.

- Admin → Health: upload a screenshot, label it as Motivd or a founder app, and forward it to your TRIBE v2 worker once one is configured (see docs/TRIBE_V2.md).

- Presets for Motivd routes so your team captures comparable frames across EN/FR and core funnels.

- Clear licensing context: TRIBE v2 weights are released under CC BY-NC, a non-commercial license, so treat public claims and production use with care.
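The capture flow in the list above can be sketched in TypeScript. This is a minimal illustration, not Motivd's implementation: the type names, the `presetsFor` and `buildWorkerCall` helpers, the payload fields, the default viewport, and the example worker URL are all assumptions made for this sketch. The actual worker contract is whatever docs/TRIBE_V2.md specifies.

```typescript
// Hypothetical sketch: every name here is illustrative, not Motivd's or TRIBE v2's API.
type CaptureSource = "motivd" | "founder-app";
type Locale = "en" | "fr";

interface CapturePreset {
  route: string; // page to screenshot, e.g. "/pricing"
  locale: Locale;
  viewport: { width: number; height: number };
}

interface WorkerCall {
  url: string;
  body: string; // JSON-encoded payload
}

// One preset per route and locale, all sharing a viewport so frames stay comparable.
function presetsFor(routes: string[], locales: Locale[] = ["en", "fr"]): CapturePreset[] {
  const presets: CapturePreset[] = [];
  routes.forEach((route) => {
    locales.forEach((locale) => {
      presets.push({
        route,
        locale,
        viewport: { width: 1280, height: 800 }, // assumed default size
      });
    });
  });
  return presets;
}

// Returns null when no worker URL is configured, since the worker is optional.
function buildWorkerCall(
  workerUrl: string | undefined,
  source: CaptureSource,
  preset: CapturePreset,
  imageBase64: string
): WorkerCall | null {
  if (!workerUrl) return null;
  if (imageBase64.length === 0) throw new Error("screenshot is empty");
  return { url: workerUrl, body: JSON.stringify({ source, preset, imageBase64 }) };
}
```

With these assumptions, `presetsFor(["/pricing", "/onboarding"])` yields four presets (two routes in two locales), and `buildWorkerCall` produces a request only when a worker URL is present, so an unconfigured setup simply skips the forwarding step.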

From UI capture to insight

Motivd in the loop

Your AI builder and device previews sit beside the research narrative, on the same stack you ship to Vercel and GitHub.

TRIBE v2 narrative (illustrated)

Meta’s announcement images are hosted on their CDN and often block hotlinking, so we show Motivd illustrations here. For the official figures, video, and other media assets, use Meta’s announcement and demo links above.

Motivd does not claim clinical or diagnostic use. TRIBE v2 outputs are research simulations, not medical advice.

Questions founders ask

Build something that matters

Real codebase, your pace: PRD-first alignment, build in Motivd Cloud, connect GitHub when you want. Chat with AI—made for founders.

How it works

1. Describe your idea. Tell us what you want to build, or drop in screenshots and docs.

2. Spec then code. We draft a Product Requirements Document (PRD) so we're aligned, then we build your Next.js app on Vercel.

3. Ship it. Connect GitHub when you are ready, deploy in a click, and add your domain, or keep shipping from Motivd Cloud until then.